On April 2nd, in the latest installment of the "Business Security Seminar," Dingxiang's data scientist, Yilong, and senior solution expert, Fengyu, examined two closely watched topics, AI threats and facial recognition risks, and introduced in detail the latest anti-fraud technologies and security products aimed at combating AI threats.

AI Threats: Posing New Challenges for Enterprises

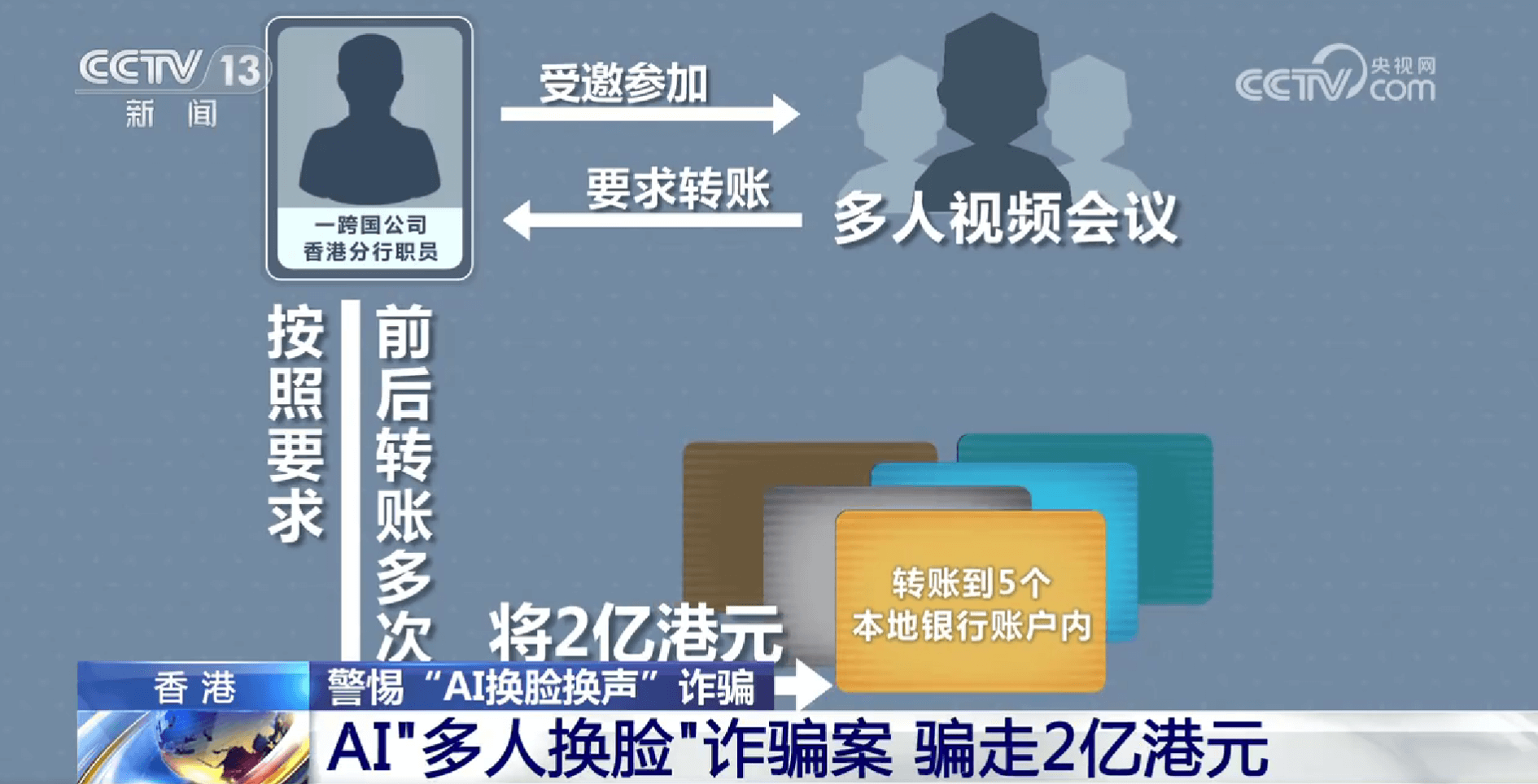

In November 2023, fraudsters disguised themselves as a close friend of Mr. Guo from a certain tech company, swindling 4.3 million RMB. In December 2023, parents of a student studying abroad received a ransom video claiming their child was kidnapped, demanding a ransom of 5 million RMB. In January 2024, 19 multinational corporation employees in Hong Kong fell victim to phishing scams, resulting in a loss of 200 million HKD. In February 2024, the AI virus "GoldPickaxe" emerged, capable of stealing facial information and transferring users' bank balances...

With the widespread application of AI, the security threats associated with it are drawing increasing attention, and AI-assisted fraud is rapidly becoming a new modus operandi for attackers, posing unprecedented risks to both enterprises and individual users. Data indicates that in 2023, fraud incidents based on "DEEPFAKE" increased by 3000%, while the number of AI-generated phishing emails grew by 1000%.

Dingxiang's Defense Cloud Business Security Intelligence Center has released the "DEEPFAKE Threat Research and Security Strategy" intelligence bulletin, which outlines that attackers can utilize AI to fabricate text, images, videos, and audio, creating realistic fake photos, videos, identification documents, financial accounts, statistical data, as well as generating new types of Trojan viruses, cracking account passwords, and launching automated cyber attacks. By combining AI-fabricated false content with elements of real information, attackers can spread rumors, impersonate acquaintances for telecommunications fraud, apply for bank loans under false pretenses, impersonate others for job interviews and employment, mimic logins to steal bank balances, and engage in phishing fraud.

Through a range of media platforms such as social media, email, remote meetings, online recruitment, and news outlets, and with the aid of emulators, device alteration software, IP spoofing, registration generators, and group control tools, fraudsters can conduct various types of scams.

Defending Against AI Attacks Requires New Security Strategies

Enterprises will need to strengthen digital identity recognition, review account access permissions, and minimize data collection. Additionally, there's a need to enhance employee awareness regarding the detection of AI threats.

Multi-channel, comprehensive, multi-stage security system.

Such a system covers various platform channels and business scenarios and provides security services such as threat perception, security protection, data accumulation, model construction, and strategy sharing. It should meet the needs of different business scenarios, offer industry-specific strategies, and accumulate and evolve based on the enterprise's own business characteristics to achieve precise platform control. This involves deploying multiple security solutions at different points in the network to protect against various threats, devising comprehensive incident response plans to respond to AI attacks swiftly and effectively, and implementing personalized protection.

AI-driven security tool system.

Deploying anti-fraud systems that combine manual review with AI technology to enhance automation and efficiency in detecting and responding to AI-based cyber attacks.

Enhanced identity authentication and protection.

This includes enabling multi-factor authentication, encrypting data at rest and in transit, and implementing firewalls. Verification should be stepped up for activities such as account login from remote locations, device changes, phone number changes, and sudden activity on dormant accounts, with continuous checks to ensure that the user's identity stays consistent throughout a session. Device information, geographic location, and behavioral operations are compared to detect and prevent abnormal activities.
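The triggers listed above can be sketched as a small decision function. This is only an illustrative sketch, not Dingxiang's implementation: the `LoginEvent` and `UserProfile` types, their field names, and the 90-day dormancy threshold are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class LoginEvent:
    # Hypothetical shape of a login attempt; field names are assumptions.
    user_id: str
    device_id: str
    country: str
    phone_changed: bool
    timestamp: datetime


@dataclass
class UserProfile:
    # Hypothetical record of what is already known about the account.
    known_devices: set
    usual_country: str
    last_active: datetime


# Assumed threshold: an account unused for 90 days counts as dormant.
DORMANT_AFTER = timedelta(days=90)


def step_up_reasons(event: LoginEvent, profile: UserProfile) -> list:
    """Return the reasons, if any, to demand extra verification."""
    reasons = []
    if event.country != profile.usual_country:
        reasons.append("remote_location")
    if event.device_id not in profile.known_devices:
        reasons.append("device_change")
    if event.phone_changed:
        reasons.append("phone_number_change")
    if event.timestamp - profile.last_active > DORMANT_AFTER:
        reasons.append("dormant_account_activity")
    return reasons


def requires_extra_verification(event: LoginEvent, profile: UserProfile) -> bool:
    """True when at least one step-up trigger fired."""
    return bool(step_up_reasons(event, profile))
```

Returning the list of reasons rather than a bare boolean lets a caller choose a verification method per trigger or log why a user was challenged.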

Principle of least privilege for account authorization.

Restricting access to sensitive systems and accounts based on the principle of least privilege, so that users can access only the resources their roles require, thereby reducing the potential impact of account hijacking and preventing unauthorized access to systems and data.
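A minimal sketch of least-privilege authorization is a deny-by-default permission table keyed by role. The role names and permission strings below are hypothetical and chosen only for illustration.

```python
# Hypothetical role-to-permission table; each role lists only what it needs.
ROLE_PERMISSIONS = {
    "support_agent": frozenset({"ticket:read", "ticket:update"}),
    "finance_analyst": frozenset({"invoice:read", "report:read"}),
    "finance_admin": frozenset({"invoice:read", "invoice:approve", "report:read"}),
}


def is_allowed(role: str, permission: str) -> bool:
    """Deny by default: unknown roles and unlisted permissions are rejected."""
    return permission in ROLE_PERMISSIONS.get(role, frozenset())
```

With this table, a hijacked `finance_analyst` account can read invoices but cannot approve them, which limits the damage an attacker can do.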

Continuous understanding of the latest technology and threats.

Keeping abreast of the latest developments in AI technology to adjust security measures accordingly. Continuous research, development, and updating of AI models are crucial to maintaining a leading position in the increasingly complex security landscape.

Continuous security education and training for employees.

Ongoing training on AI technology and its associated risks, using methods such as simulated attacks, vulnerability discovery, and security training to help employees identify and avoid AI attacks and other social engineering risks. Maintaining vigilance and promptly reporting anomalies significantly enhances an organization's ability to detect and respond to deepfake threats.

To mitigate AI-based threats, it's necessary to effectively identify and detect AI threats while also preventing the exploitation and proliferation of AI fraud. This requires not only technological countermeasures but also complex psychological warfare and the enhancement of public safety awareness.

Dingxiang: Full Product Line Upgrade to Combat AI Threats

In this live broadcast, Dingxiang's data scientist, Yilong, and senior solution expert, Fengyu, provided detailed insights into Dingxiang's latest upgraded anti-fraud technology and security products, aimed at systematically combating new threats posed by AI: adaptive obfuscation strengthening for iOS, seamless verification capable of generating infinite images, device fingerprinting supporting unified device information across platforms, Dinsight risk control engine capable of intercepting complex attacks and uncovering potential threats, and Dingxiang's comprehensive panoramic facial security threat perception solution tailored to address "DEEPFAKE" risks. These enhancements aim to establish a multi-channel, comprehensive, multi-stage security system for enterprises.

Safeguarding App Security to Prevent Automated AI Hacking.

Dingxiang's adaptive iOS reinforcement, based on graph neural network technology, analyzes and extracts code features to automatically select an appropriate obfuscation method for each code block, significantly increasing the difficulty of reverse engineering while reducing computational performance overhead by 50%. Through encryption and obfuscation engines, App code is encrypted, obfuscated, and compressed to greatly enhance code security, effectively preventing attacks such as cracking, duplication, and repackaging.

Ensuring Account Security to Prevent Malicious AI Registration and Login Attempts.

Dingxiang's atbCAPTCHA, based on AIGC technology, prevents AI brute force attacks, automated attacks, and phishing attacks, effectively preventing unauthorized access, account hijacking, and malicious operations, thus protecting system stability. It integrates 13 verification methods and multiple prevention and control strategies, aggregating 4380 risk policies, 112 types of risk intelligence, covering 24 industries and 118 risk types. With a precision control rate of up to 99.9%, it enables rapid transformation from risk to intelligence. It also supports seamless verification for secure users, with real-time response and disposition capabilities within 60 seconds, further improving the convenience and efficiency of digital login services.

Identifying AI-fabricated Devices to Prevent Malicious AI Fraud.

Dingxiang Device Fingerprinting generates a unified and unique fingerprint for each device by unifying device information across multiple platforms. It builds multi-dimensional recognition strategy models based on device, environment, and behavior to identify risks such as virtual machines, proxy servers, and manipulated emulators. By analyzing whether devices exhibit abnormal behaviors or deviations from user habits, it tracks and identifies fraudulent activities to help enterprises achieve unified operation of the same ID across all scenarios and channels, facilitating cross-channel risk identification and management.
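The general idea of a device fingerprint can be illustrated with a simple hash over canonicalized device attributes. This is a generic sketch and not Dingxiang's algorithm: the attribute names and the boolean risk signals (`is_emulator`, `uses_proxy`, `is_virtual_machine`) are assumptions for the example.

```python
import hashlib
import json


def device_fingerprint(attributes: dict) -> str:
    """Hash a canonical (key-sorted) JSON form of the device attributes."""
    canonical = json.dumps(attributes, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def environment_risks(attributes: dict) -> list:
    """Collect risk flags from hypothetical boolean environment signals."""
    flags = []
    if attributes.get("is_virtual_machine"):
        flags.append("virtual_machine")
    if attributes.get("uses_proxy"):
        flags.append("proxy")
    if attributes.get("is_emulator"):
        flags.append("emulator")
    return flags
```

Sorting the keys means the same attributes yield the same fingerprint regardless of the order in which they were collected. An exact hash like this changes whenever any single attribute changes, so a production system would presumably need fuzzier matching to keep a fingerprint stable across OS updates and similar drift.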

Uncovering Potential Fraud Threats to Prevent Complex AI Attacks.

Dingxiang Dinsight assists enterprises in risk assessment, anti-fraud analysis, and real-time monitoring to enhance risk control efficiency and accuracy. Dinsight's average processing time for daily risk control strategies is under 100 milliseconds, and it supports configurable access to and accumulation of data from multiple sources. It draws on mature metrics, strategies, and accumulated modeling experience, along with deep learning technology, to monitor its own performance and iterate on its risk control mechanisms. Paired with the Xintell intelligent model platform, it automatically optimizes security policies for known risks and, by mining risk control logs and potential risk data, configures risk control strategies for different scenarios. With its standardized data processing, mining, and machine learning processes based on associative networks and deep learning technology, it provides a one-stop modeling service covering data processing, feature derivation, model construction, and final model deployment.

Intercepting "DEEPFAKE" Attacks to Ensure Facial Application Security.

Dingxiang's comprehensive panoramic facial security threat perception solution intelligently verifies multiple dimensions of information such as device environment, facial information, image authentication, user behavior, and interaction status. It rapidly identifies over 30 types of malicious attacks and risks, including injection attacks, liveness forgery, image forgery, camera hijacking, debugging risks, memory tampering, root/jailbreak, malicious ROMs, emulators, and system operation risks. Upon detecting forged videos, fake facial images, or abnormal interactions, it automatically blocks the operation. Video verification strength and user-friendliness can be configured flexibly, so that normal users pass through seamless verification while verification is dynamically strengthened for abnormal users.

By conducting comprehensive risk prevention and control measures before, during, and after incidents, Dingxiang aims to prevent AI threats that have formed industrialized and mature attack patterns.